Further adventures with LLM curve-guessing

The capacity of LLMs to solve numerical approximation problems is more or less known. For example, Merler1, et al, looked into using Llama 3 8B for symbolic regression through what the authors call “In-Context Symbolic Regression”. Vacareanu2, et al, did an in-depth study of what multiple LLMs can do in linear and non-linear regression (a lot, it turns out). The appendix of this work is especially interesting, with multiple examples of LLMs rationalizing their choices. Nafar, A., Venable, K.B. and Kordjamshidi,3 looked into in-context learning through a study of regression.

Imagine a game: you are given a few points , where is a secret smooth function and is within the interval . Your task? To deduce the function and approximate it with Python code.

I got curious about this game (and the capacity of LLMs to play it, see also the “LLMs and cheatsheets” post) and so I picked as a secret function, a few LLMs from lmarena, and used the following prompt:

You will be given a set of points (x, f(x)) that are sampled from an 1D secret real function. The points x are sampled uniformly from the interval [-5,5]. All points sampled use limited precision, so you may assume that the points are not exact, but they are close to the actual values of the secret function.

# Instructions

1. Your first task is to analyze these points. Print all your reasoning and think systematically.

- Think about the characteristics of the function based on the points provided.

- You may assume that the secret function is continuous.

- You can use any simple mathematical form you think is appropriate to approximate the function.

- Make a guess about the form of the function based on the points provided and iteratively refine the guess based on the points.

- Remember to print the reasoning.

2. Your second task is to generate a python function that approximates the secret function.

# Rules for the python function:

- The function should take a single float as input and return a float as output.

- You may assume that we have already imported the numpy library as np.

- You should guess on a parametric form on your own, do not use any curve-fitting capabilities of python or numpy.

- Only return the function definition, without any additional text or comments.

- The function should be returned as a string, and it should be valid Python code.

Points:

[(-5.0, -4.795), (-4.796, -4.779), (-4.592, -4.559), (-4.388, -4.159), (-4.184, -3.612), (-3.98, -2.958), (-3.776, -2.236), (-3.571, -1.488), (-3.367, -0.754), (-3.163, -0.069), (-2.959, 0.537), (-2.755, 1.039), (-2.551, 1.421), (-2.347, 1.675), (-2.143, 1.802), (-1.939, 1.809), (-1.735, 1.711), (-1.531, 1.529), (-1.327, 1.287), (-1.122, 1.012), (-0.918, 0.73), (-0.714, 0.468), (-0.51, 0.249), (-0.306, 0.092), (-0.102, 0.01), (0.102, 0.01), (0.306, 0.092), (0.51, 0.249), (0.714, 0.468), (0.918, 0.73), (1.122, 1.012), (1.327, 1.287), (1.531, 1.529), (1.735, 1.711), (1.939, 1.809), (2.143, 1.802), (2.347, 1.675), (2.551, 1.421), (2.755, 1.039), (2.959, 0.537), (3.163, -0.069), (3.367, -0.754), (3.571, -1.488), (3.776, -2.236), (3.98, -2.958), (4.184, -3.612), (4.388, -4.159), (4.592, -4.559), (4.796, -4.779), (5.0, -4.795)]

The following considerations guided the design of this prompt:

- I specifically instructed the LLMs not to use Python's built-in curve-fitting functions (like

numpy.polyfit). The goal was to see if they could approximate the function using their own knowledge. - Initially, some LLMs would output the function directly, without showing their reasoning. I adjusted the prompt to encourage them to explain their thought process.

- Finally, for simplicity, all values were rounded to three decimal places.

How would I approach this?

If I was tackling this problem myself, I'd start by sketching a curve. (50 points is probably overkill in this interval!). From there, I'd look for patterns and try to guess the underlying function.

Experiment

I only generated one approximation per model to keep things fair. However, a few of the strongest models, like o3 and Claude, were able to figure out the form of the hidden function when asked to “think again”.

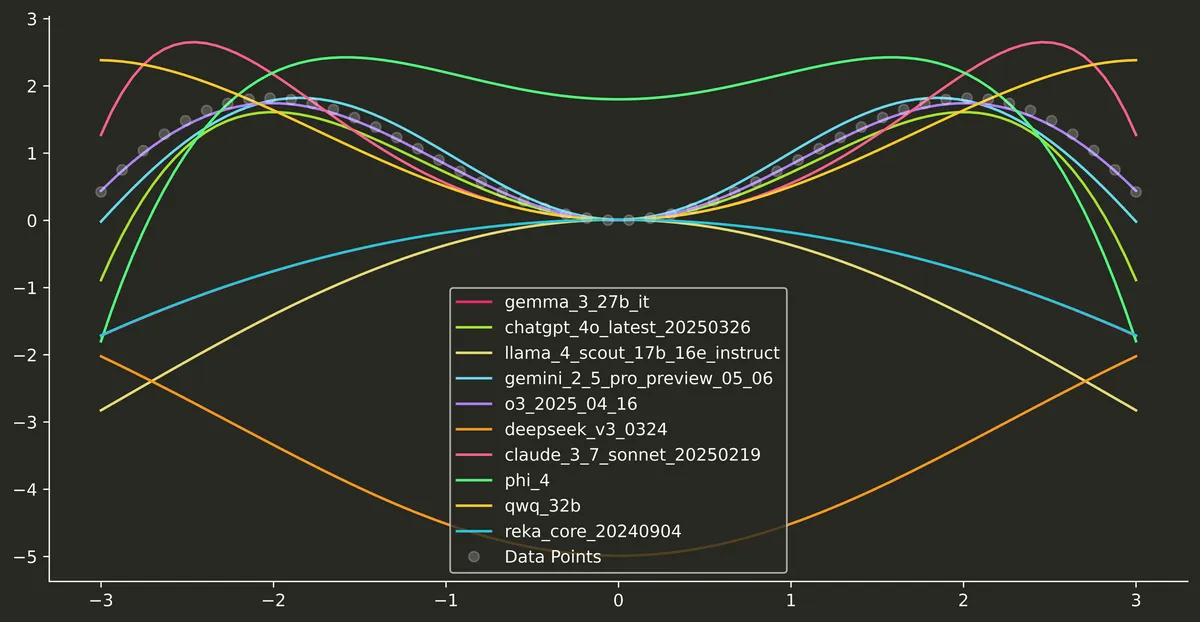

Here’s a view of the results from all the models.

Promising! Some of the strongest LLMs picked good models of the behavior (two peaks, agreement at 0). Almost all the models picked functions that satisfy .

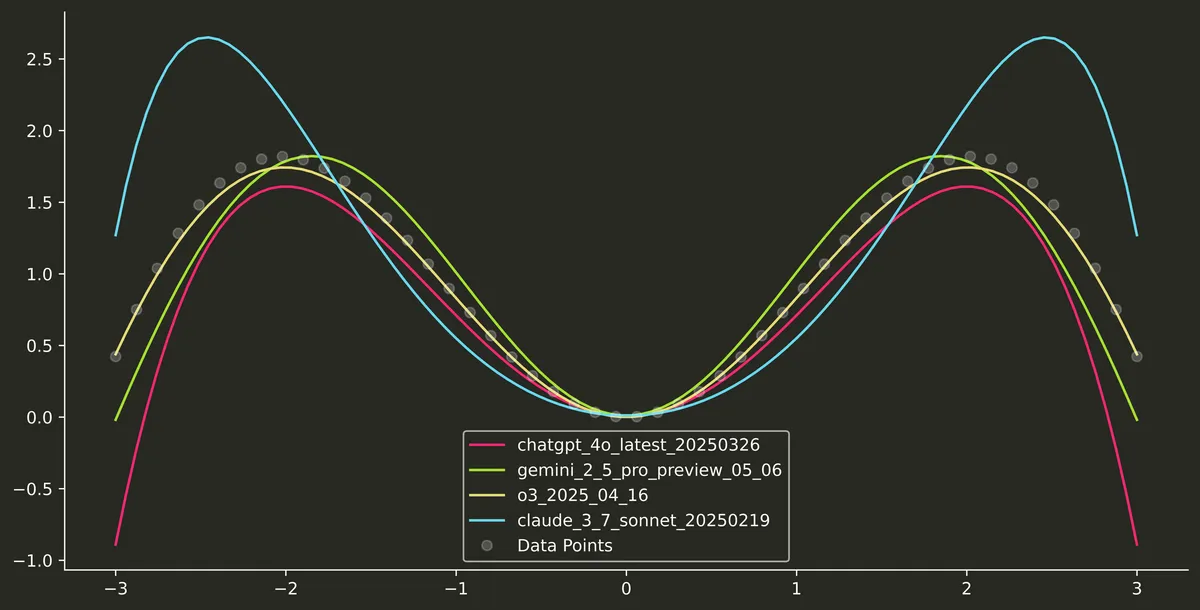

We can zoom in on the best solutions a bit more (and also note that they are generated by the "usual suspects", e.g., gemini-2.5-pro, etc.).

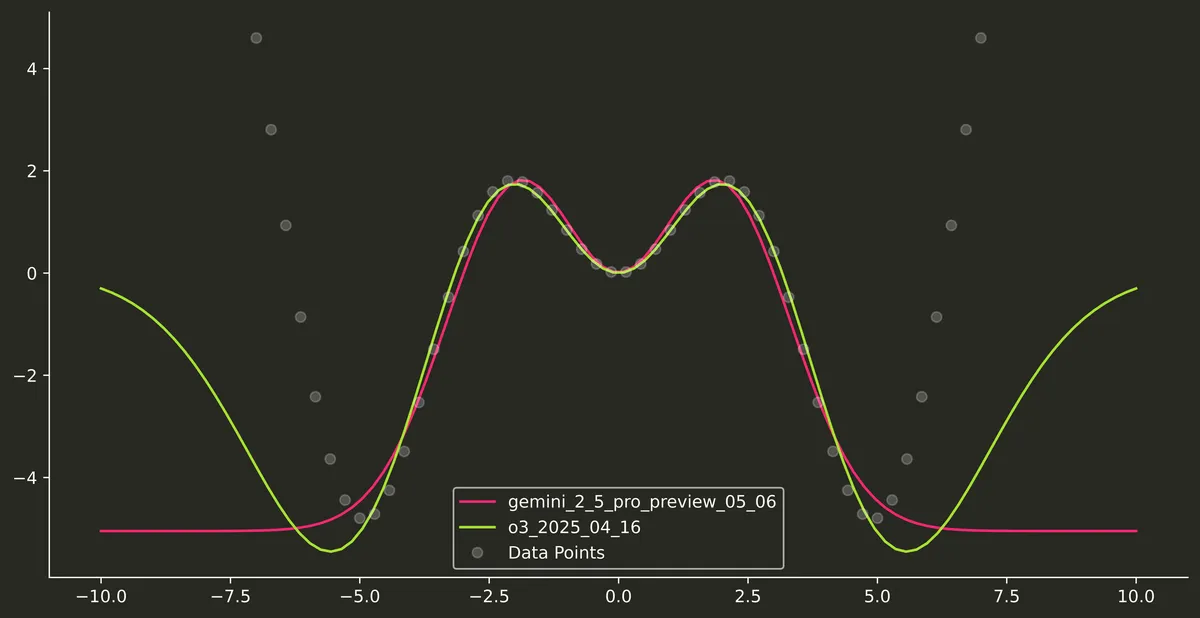

o3 had the best (and perhaps most creative?!) approximation:

Interestingly, o3 and gemini-2.5 produced the only approximations that remain bounded as the absolute value of increases. Since the LLMs cannot see outside , we don’t give any points for better behavior outside that interval.

Still, it’s nice that the o3 solution is well-behaved.

To be continued …

A few more results

o3 produced the best approximation. Here’s the reasoning it generated:

## Observations & Reasoning

1. Symmetry

• Points for +x and –x are identical ⇒ the function is _even_: f(–x)=f(x).

• Therefore the function should depend on x².

2. Zero‐crossings

• f(0) ≈ 0.

• f(x) changes sign again near |x| ≈ 3.16.

• So a convenient factor is (1 – x²/10); it vanishes at x≈±√10≈3.16.

3. Overall shape

• Between the two zeros (|x|<3.16) the function is positive and peaks near |x|≈2.

• Outside that interval it becomes negative, reaching about –4.8 at x=±5.

• A simple quartic of the form k·x²(1–x²/10) matches the sign pattern, but its tails decay too slowly (≈ –30 at x=5 for any k that fits the peak).

4. Taming the tails

• Multiplying by an exponential damping term e^{–βx²} keeps behaviour near the origin almost intact but reduces the magnitude out in the tails.

• Choosing β≈0.08 brings f(±5) down to roughly –5 while leaving the peak height ≈1.8.

5. Final heuristic model

f(x) = x² · (1 – x²/10) · exp(–0.08x²)

• Zeros at x=0 and x≈±3.16 ✅

• Peak ≈1.8 at |x|≈1.9–2.1 ✅

• f(±5) ≈ –5 (matches –4.8 within visible noise) ✅

That single analytic expression captures the main qualitative and quantitative features of the sampled data without any numerical curve-fitting.

All approximations generated:

def gemma_3_27b_it(x):

return -0.1922 * x**2 + 0.01

def chatgpt_4o_latest_20250326(x):

return -0.1 * x**4 + 0.8 * x**2 + 0.01

def llama_4_scout_17b_16e_instruct(x):

return -4.795 + 4.805 * (1 - (x/5)**2)**2

def gemini_2_5_pro_preview_05_06(x):

A = 6.7227

gamma = 0.26

x0 = 1.939

C_offset = -5.048

term1 = np.exp(-gamma * (x - x0)**2)

term2 = np.exp(-gamma * (x + x0)**2)

return A * (term1 + term2) + C_offset

def o3_2025_04_16(x):

return x**2 * (1 - x**2/10) * np.exp(-0.08 * x**2)

def deepseek_v3_0324(x):

return 0.01 - 5*np.exp(-0.1*x**2)

def claude_3_7_sonnet_20250219(x):

return -0.01 * x**6 + 0.05 * x**4 + 0.5 * x**2 + 0.01

def phi_4(x):

a = -0.1

b = 0.5

c = 1.8

return a * x**4 + b * x**2 + c

def qwq_32b(x):

return -0.0286 * x**4 + 0.521 * x**2 + 0.01

def reka_core_20240904(x):

return -0.1914 * x**2 + 0.01

def mixtral_8x7b_instruct_v0_1(x):

return 0.000125*x**6 - 0.3125*x**4 + 4.779*x**2

Merler, M., Haitsiukevich, K., Dainese, N. and Marttinen, P., 2024. In-context symbolic regression: Leveraging large language models for function discovery. arXiv preprint arXiv:2404.19094.↩

Vacareanu, R., Negru, V.A., Suciu, V. and Surdeanu, M., 2024. From words to numbers: Your large language model is secretly a capable regressor when given in-context examples. arXiv preprint arXiv:2404.07544.↩

Nafar, A., Venable, K.B. and Kordjamshidi, P., 2024. Learning vs Retrieval: The Role of In-Context Examples in Regression with LLMs. arXiv preprint arXiv:2409.04318.↩