Revisiting a Root-Finding Idea from Undergrad

I recently recovered some notes from undergrad, when I was taking numerical analysis for the first time.

Most people know of Newton's method: given a well-behaved function and some initial point , we define the sequence

and under good conditions, this sequence converges quadratically (read: super fast) to a root of , . We will assume at least one such root exists.

While taking a break from homework, I found myself sketching continuous functions and wondering if I could invent a sequence that would find roots by following a geometric process, rather than by writing down a formula for the sequence.

Chasing roots with circles?!

Here's what I tried: start with a function , well-behaved, e.g., or better, and assume it has a root. We take a random point and then draw the circle with radius and center . Next, we consider the points where this circle intersects the graph of . This is equivalent to finding all such that

So far so good, but why should this equation even have roots? Well, either the entire graph of is in the circle or it isn't.

- If it isn't, then there are intersections with the circle, so roots exist.

- If is contained in the circle, then it cannot have its root at (as then the circle of radius would just be a point and thus it cannot contain the whole graph of a continuous function1). If the root is not at , then there must be some other such that is contained in the circle, but this is also impossible: the circle constraints all possible values of to be positive, otherwise there would be a part of the graph outside of the circle and the intermediate value theorem does the rest.

And so we have shown that if is continuous and has a root somewhere, then it must intersect such circles to get to the root. The idea from here was:

- Find the set

- Select (assuming is finite for all , e.g., if is analytic, I believe this follows because its roots have to be isolated).

- Repeat with to get to , etc.

This is where I stopped the analysis as an undergrad. The equation leads to harder problems than just solving . For example, if is your target, the circle equation is a quartic polynomial!

What appealed to me was that, when sketching the sequence on paper, you could see it taking large steps when far from the root and gradually slowing down as it approached closer.

Can we salvage this idea and show it converges?

There's a simple way to make a method out of this madness: we will replace with a tangent approximation! First,

and then

So at each step we compute the two values

and then .

Informal convergence proof

Let's now show (a bit informally) that this converges! Close to a root , and , so

where . So finally

where , which means we have linear convergence when we are sufficiently close to the root! In fact, the bigger the slope of at the root, the faster this method converges (when close to the root)!

Getting stuck

Far from the root, problems arise because this algorithm targets minima instead of roots. When we are close to a minimum, say , at which point the possible iterates are

Both move away from the minimum, so the algorithm will pick whichever has the smallest distance from it.

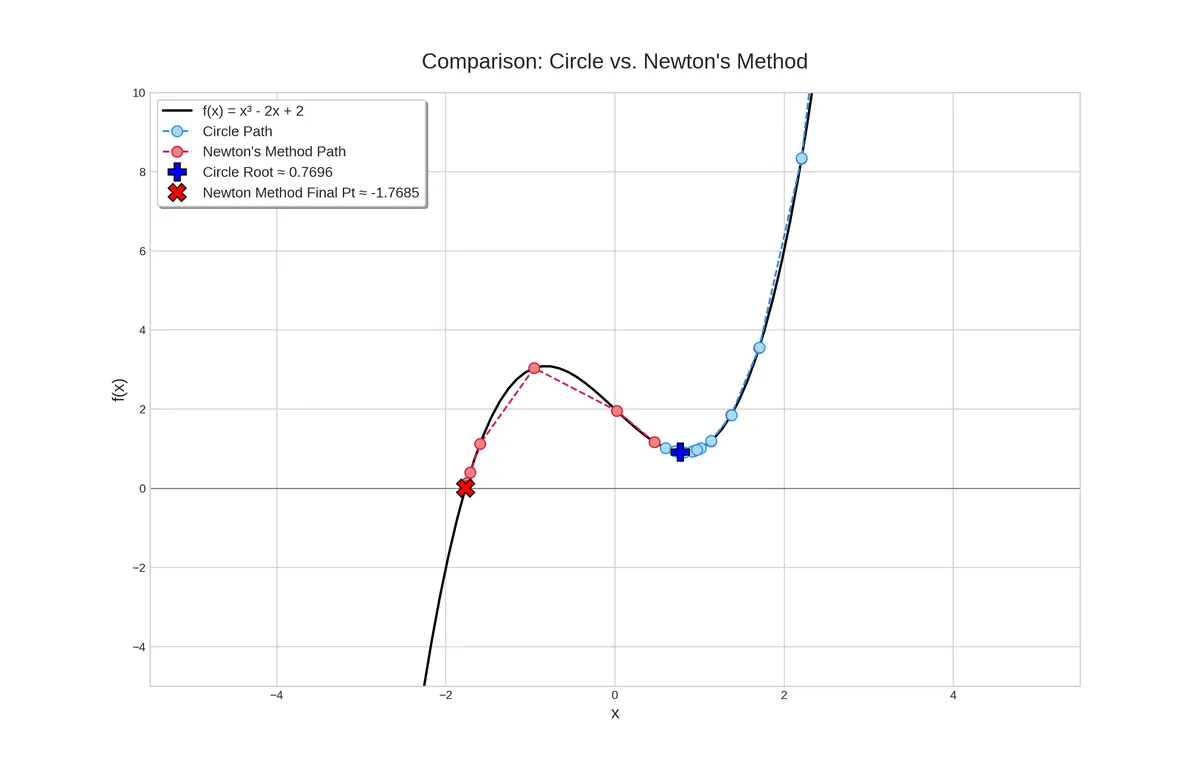

You can see this behavior here:

Because of the slope, in the beginning the two methods do similar steps, but eventually we get stuck.

Is this a practical method?

Not really: it requires four evaluations of per iteration: , , then and . Compared to Newton, Secant, Brent, etc., it seems like we get slower convergence for more work.

Also, because of its greedy nature, it can get stuck in local minima.

However, this method converges quickly when the slope at the root is steep, and slowly when it is shallow.

Footnotes

Technically, a function defined only at a single point is continuous, but you know the kind of functions we’re talking about here. Also, if the root is at , we’re done and don’t need to take another step.↩