The Smallest Weight Punches Above Its Weight (in Expectation)

I saw this nice bound on Twitter; it's proved as part of this work.

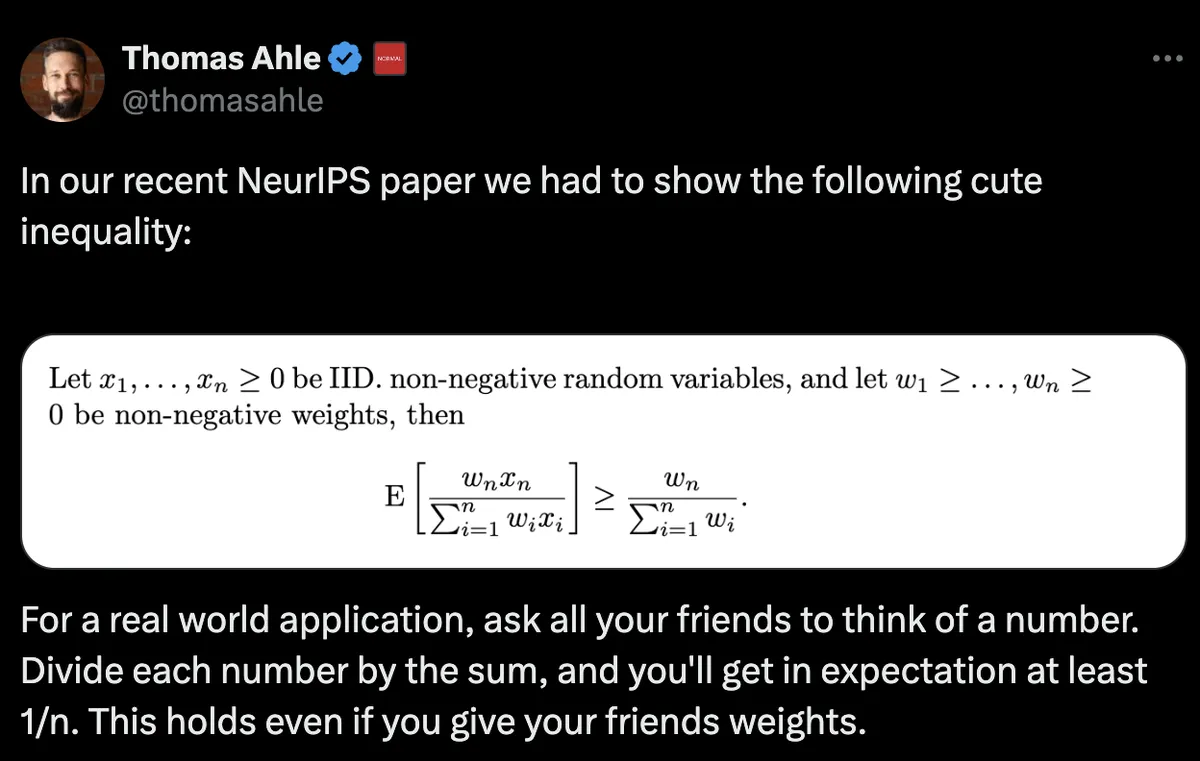

Assuming:

- are IID (independent and identically distributed) non-negative random variables and

- are non-negative weights,

we want to show that for the smallest of the 's, we have:

It is necessary that at least one of and if then there's nothing to prove, so let's assume that (this also means that all ). It is also necessary that not all of are 0 at the same time (an unlikely event as they are independent).

We can rewrite the bound as follows. First set , . Then, we want to prove:

A simpler result

There's a similar result that is a bit easier to show. First, set , then we can argue that the distribution of does not depend on (this is called "symmetrization"), so they should all have the same expected value, let's say . From this, we have that and so

A search for a proof

We can't use symmetrization immediately with the weighted version, the variables are not identically distributed anymore.

Here's an idea, though. Let us first define the shorthand and . Then, from linearity of expectation, we have:

As a side effect, , so if we show , then we are done!

Instead of going for a clever two-step-Jensen proof (like the authors of the paper), I'll attempt to show this with calculus. 🤔 This assumes more stuff, but it's fine, this isn't math class.

Proof

For , (from the ordering of the ) and we want to show that Let’s be adventurous and take a derivative with respect to while holding for constant. Then because of , we get that and

so the difference is increasing as is increasing (and is decreasing, to compensate)1. We just need to find the minimum of . We can bound this minimum from below by setting and to be equal to the middle point (as we are decreasing , this also decreases the difference )2.

But now and have the same weight , so by symmetrization the lower bound is 0, i.e.,

So, we have shown that are increasing with , so is the maximum, which implies , and we are done!

To justify swapping derivative and expectation, we bound the term . It can be shown that , relying on and the condition (for ). This inequality implies . Since , is bounded by the finite constant , allowing the interchange.↩

Remember that according to the original assumptions, so setting the two equal is the smallest allowed value for .↩